By Jonathan Oh, CEO – SupplyCart / ADAM-Procure

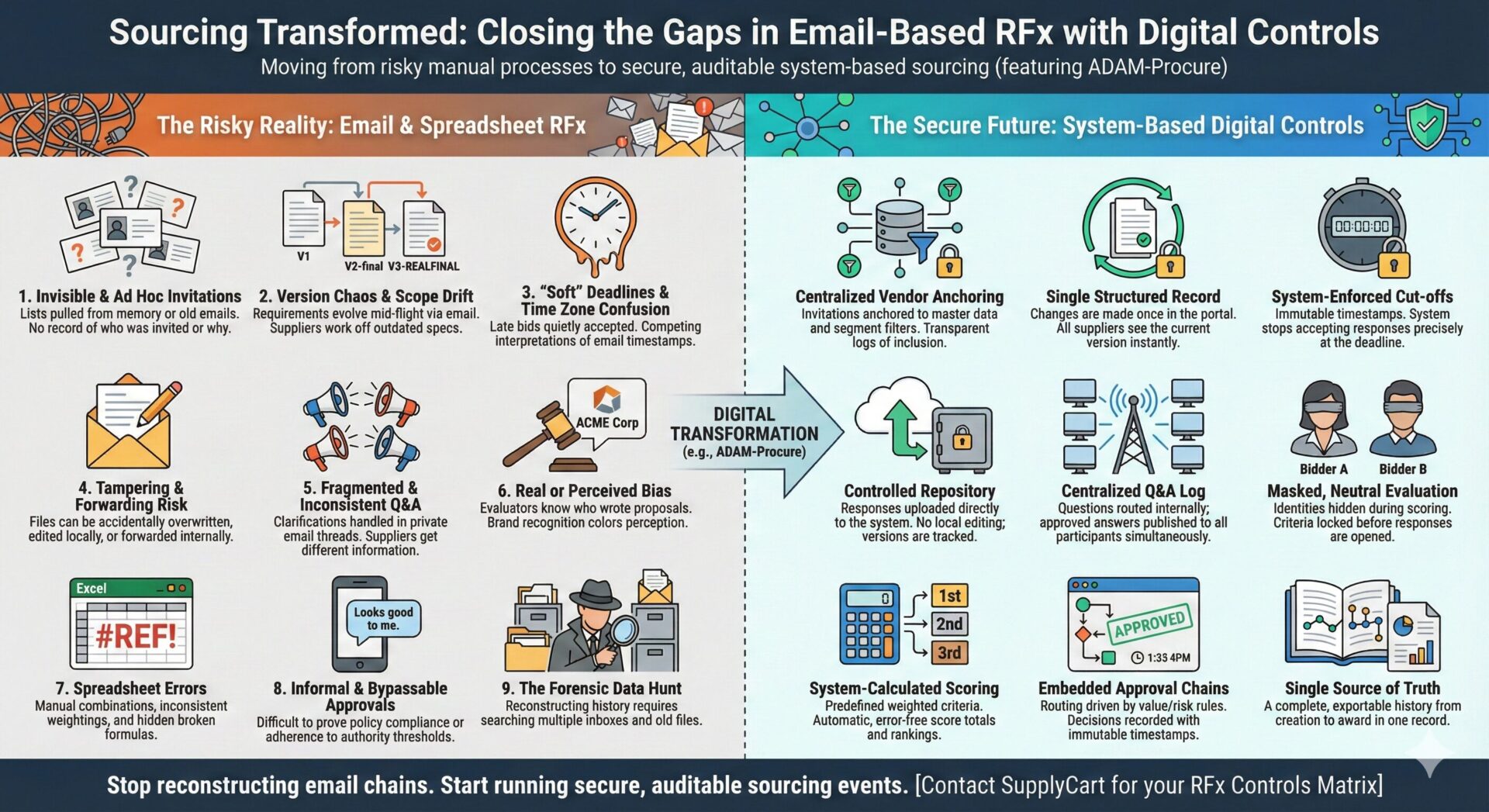

Real-World Sourcing Pitfalls, and the Digital Controls That Fix Them

Why email-based RFx keeps burning teams, and how to close the gaps for good

Most procurement teams can point to at least one sourcing event that “felt fine at the time” but turned into a problem later.

A vendor complains that they were treated unfairly.

An internal audit can’t find a clear trail of how the decision was made.

A stakeholder insists a late bid “should have been considered” because “we always do it that way.”

When you unwind those situations, they nearly always share the same root cause: the RFx ran through email and spreadsheets rather than a controlled system. Shangrong’s “Real World Sourcing Challenges and Best Practices” stories made this painfully clear. The issue isn’t that people are dishonest; it’s that the process is fragile by design.

This article walks through nine common failure modes of email-based RFx, shows the specific digital controls that fix them, and then pulls it together in a before/after narrative you can use with your own stakeholders. The reference model is a system like ADAM-Procure, but the principles are universal.

Invisible invitations and ad hoc vendor lists

The first pitfall appears before the RFx even goes out: who you decide to invite.

In an email-driven world, the category manager often builds the invite list from memory, old inbox searches, and forwarded contacts. There is no central, authoritative record of who was invited, who was left out, and why. Months later, when a supplier asks, “Why weren’t we approached?”, the answer relies on someone’s recollection rather than on documented rationale.

A digital vendor master changes that. In ADAM, suppliers are stored with categories, capabilities, regions, and status flags. When you launch an RFx, you filter and select from that pool. The system records which vendors were invited and when. If an excluded supplier challenges you, you can say, “At that point in time, you were not yet approved in the XYZ category,” and actually show the record.

Trust starts with being able to show how the field was set, not just how the race was run.

Version chaos and scope drift mid-stream

Shangrong’s stories about RFx documents quietly mutating will sound familiar to many: one stakeholder emails a late change, another edits their copy and forgets to forward it, a third has an older version buried in a chain.

The result is that different suppliers are working off different versions of the brief. When proposals come back, some have priced for the new requirement, some haven’t, and the evaluation becomes an exercise in guesswork. Updating all bids after the fact is messy; ignoring the inconsistency is worse.

A structured RFx record in ADAM avoids this. There is a single living version of the scope, with a visible change history. If a material change must be made, it is done once; all invited vendors see it in their portal; the system logs who changed what, and when. If the timeline needs to shift to be fair, that is reflected explicitly.

You still face the same business pressures, but the rules of the game are visible and consistent for everyone.

Soft deadlines and the “friendly late bid”

Another classic pitfall is the elastic deadline. In an email process, “close of business Friday” depends on whose clock you’re looking at. One supplier sends a “final” bid a few hours late with a polite apology; another assumes the deadline was strict and doesn’t try. A preferred incumbent might quietly be allowed to submit after everyone else.

Even if the intention is benign, the perception is corrosive.

In a system like ADAM, submission windows are configured upfront. Responses are accepted only during that window; after the cut-off, the system stops accepting new data. Every submission carries an immutable timestamp taken from a single, standardised clock, so there is no debate about whose time counts.

If there’s a genuine reason to consider a late bid, such as a system outage or a documented error, procurement can override the rule with an explicit, approved exception. That exception becomes part of the RFx record. You move from “we made an exception” to “here is where we made it, and here is who authorised it.”
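To make the rule concrete, here is a minimal Python sketch of a submission window with a logged override. It assumes timezone-aware UTC timestamps. The class, method and role names (`SubmissionWindow`, `grant_exception`, “Head of Procurement”) and the dates are hypothetical illustrations, not ADAM’s actual API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class SubmissionWindow:
    """Hypothetical RFx submission window (illustrative sketch, not ADAM's API)."""
    opens_at: datetime   # timezone-aware; one reference clock for every bidder
    closes_at: datetime
    exceptions: list = field(default_factory=list)  # approved late-bid exceptions

    def grant_exception(self, vendor: str, reason: str, approved_by: str) -> None:
        # The override itself is recorded: who, why, who authorised it, and when.
        self.exceptions.append({
            "vendor": vendor,
            "reason": reason,
            "approved_by": approved_by,
            "granted_at": datetime.now(timezone.utc),
        })

    def accepts(self, vendor: str, received_at: datetime) -> bool:
        if received_at.tzinfo is None:
            raise ValueError("timestamps must be timezone-aware")
        if self.opens_at <= received_at <= self.closes_at:
            return True
        # After the cut-off, only a vendor with a recorded exception gets through.
        is_late = received_at > self.closes_at
        return is_late and any(e["vendor"] == vendor for e in self.exceptions)


window = SubmissionWindow(
    opens_at=datetime(2024, 3, 1, 0, 0, tzinfo=timezone.utc),
    closes_at=datetime(2024, 3, 15, 9, 0, tzinfo=timezone.utc),
)
late_bid = datetime(2024, 3, 15, 12, 0, tzinfo=timezone.utc)
print(window.accepts("Vendor A", late_bid))  # False: the window has closed
window.grant_exception("Vendor A", "portal outage", approved_by="Head of Procurement")
print(window.accepts("Vendor A", late_bid))  # True: explicit, logged exception
```

The design point worth copying is that the exception is data, not a conversation: the late bid only gets through because a named approver and a reason were recorded first.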

Tampering risk: editable attachments everywhere

Even when everyone is acting in good faith, email-based submissions are fragile. Proposals arrive as Word, Excel or PDF files attached to emails. They get downloaded, forwarded, sometimes “tidied up” so they’re easier to read. Different versions end up on local drives and shared folders.

From a controls perspective, this is a nightmare. It may never happen in practice, but it is conceptually possible to alter a bid without leaving much trace.

Digital RFx fixes this by changing how responses are captured. In ADAM, suppliers submit directly into the RFx module. They answer structured questions, upload documents into a controlled repository, and lock their responses when they are done. Evaluators, in turn, view these responses from the system, not from forwarded attachments.

It is no longer possible to “tweak” a supplier’s proposal in the shadows; everyone is looking at the same system-stored version.

Fragmented Q&A and uneven clarifications

Nothing undermines fairness faster than asymmetric information. In an email-driven event, suppliers often send questions to whoever they know best: procurement, a technical stakeholder, or the business owner. Answers are typed on the fly and sent back one-to-one, so some suppliers get more detailed hints than others.

Shangrong’s examples of vendors interpreting the same requirement in very different ways because of inconsistent Q&A are exactly how protests and disputes start.

A system-based Q&A log changes the dynamics. Vendors submit questions via a portal. Procurement and subject matter experts see the full list, group duplicates, and draft answers. Once approved, those answers are published to every participating supplier. If a clarification materially affects scope or scoring, everyone has equal access to it.

You can still have more nuanced conversations where necessary, but the core rules of the game are shared transparently, not negotiated privately.

Bias: real, unconscious, or perceived

Even if everything up to this point is clean, evaluation itself is a major failure mode in email-based RFx.

Evaluators receive proposals with logos and branding front and centre. They know who the incumbent is, who has a good reputation, who they had coffee with last week. Criteria might be loosely defined; weighting might be implicit rather than explicit. Comments and partial scores sit in personal spreadsheets or notes.

Later, if a disappointed supplier hints at favouritism, it is very difficult to prove the contrary with anything more than assertions.

Masked evaluation is the control that addresses this. In ADAM, when the scoring phase opens, vendor identities can be hidden. Evaluators see “Bidder A, Bidder B, Bidder C” and the content of their responses, but not the names. They score against criteria and weightings that were locked before responses were opened.

Only once scoring is complete are names revealed and the discussion moves to “who do we award and why?” At that point, it is legitimate to consider incumbency, transition risk, or strategic fit, but the foundational scoring was done on content, not brand.

Just as importantly, the system keeps individual scores and comments with timestamps. If someone later asks, “Did anyone try to manipulate this?”, you can show that scores were entered over a period of days by specific evaluators and then locked, not retrofitted to suit a preferred outcome.
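Here is a minimal Python sketch of the masking idea. The `MaskedEvaluation` class and its methods are hypothetical, written for illustration rather than taken from ADAM. It shuffles bidders before labelling them, accepts scores only against labels, and refuses to reveal names until scoring is locked.

```python
import random
import string


class MaskedEvaluation:
    """Hypothetical masked-scoring sketch (not ADAM's API); supports up to 26 bidders."""

    def __init__(self, vendors, seed=None):
        shuffled = list(vendors)
        # Shuffle so label order leaks nothing (the incumbent is not always "Bidder A").
        random.Random(seed).shuffle(shuffled)
        self._label_to_vendor = {
            f"Bidder {string.ascii_uppercase[i]}": name for i, name in enumerate(shuffled)
        }
        self.scores = {}  # (evaluator, label) -> score; vendor names never appear here
        self.locked = False

    def labels(self):
        # This is all evaluators see while scoring is open.
        return sorted(self._label_to_vendor)

    def score(self, evaluator, label, value):
        if self.locked:
            raise PermissionError("scoring is locked")
        if label not in self._label_to_vendor:
            raise KeyError(label)
        self.scores[(evaluator, label)] = value

    def lock(self):
        self.locked = True

    def reveal(self):
        # Identities are released only after scoring has been locked.
        if not self.locked:
            raise PermissionError("lock scoring before revealing identities")
        return dict(self._label_to_vendor)
```

In a real system the score entries would also carry timestamps, as described above; they are omitted here to keep the masking logic visible.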

Spreadsheet scoring and the hidden formula error

Shangrong’s deck included a simple but painful example: a sourcing analyst who unknowingly used an outdated scoring template. A single broken formula meant one criterion was double-counted and another ignored. The wrong supplier “won” on paper, and no one realised until much later.

In email- and spreadsheet-based RFx, this is very hard to avoid. Each event spawns its own workbook, often built by copying the last one. Over time, small alterations accumulate; someone hides a column here, changes a weighting there.

A system like ADAM removes the temptation to reinvent scoring each time. Criteria and weightings are defined once in the RFx configuration. Evaluators enter raw scores; the system applies the weights consistently. If you do change a weighting midstream, that change is visible in the configuration history.

Category managers spend their time interpreting what the scores mean, not debugging VLOOKUPs.
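The arithmetic the system applies is a plain weighted sum. For Bidder A below, it is 0.5 × 8 + 0.3 × 6 + 0.2 × 7 = 7.2. The criteria and weights in this Python sketch are hypothetical. The point is that the weights live in one read-only place, and a missing or extra criterion raises an error instead of quietly skewing the total, which is exactly the failure in the broken-template example.

```python
from types import MappingProxyType

# Hypothetical criteria and weights, fixed in the RFx configuration before
# responses are opened. MappingProxyType makes this mapping read-only at runtime.
WEIGHTS = MappingProxyType({"technical": 0.5, "commercial": 0.3, "delivery": 0.2})


def weighted_total(raw_scores: dict, weights=WEIGHTS) -> float:
    # Every criterion scored exactly once: nothing silently ignored or double-counted.
    if set(raw_scores) != set(weights):
        raise ValueError(f"expected scores for {sorted(weights)}, got {sorted(raw_scores)}")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    total = sum(weights[c] * raw_scores[c] for c in weights)
    return round(total, 2)  # rounded for reporting only


print(weighted_total({"technical": 8, "commercial": 6, "delivery": 7}))  # 7.2
print(weighted_total({"technical": 7, "commercial": 9, "delivery": 6}))  # 7.4
```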

Informal approvals and bypassed thresholds

Even after evaluation is complete, email-based workflows introduce another failure mode: approvals.

It’s common to see an evaluation spreadsheet circulated by email with a short note, “Recommend Supplier X; please confirm”, and an approving manager replying “OK” from a mobile device. If your organisation has delegation-of-authority thresholds, cross-functional steering committees, or co-signature requirements, it’s very difficult to demonstrate consistently that they were followed.

When approvals live inside the RFx module, they become part of the record. ADAM can route the award recommendation through a defined chain based on amount, risk level, or category. Approvers see the summary, open the underlying scores if needed, and approve or reject inside the system. Their decision, and any comment, is logged with a timestamp.

Political debates about “who approved this?” are replaced by a much simpler answer: “Here is the approval chain; here are the people who signed it off.”
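As an illustration, routing can be as simple as a table of value bands. The thresholds, role names and the high-risk rule in this Python sketch are assumptions made for the example. Real delegation-of-authority limits come from your own policy, and ADAM’s configuration will look different.

```python
from datetime import datetime, timezone

# Hypothetical delegation-of-authority bands (not ADAM's configuration).
THRESHOLDS = [
    (50_000, ["Procurement Manager"]),
    (500_000, ["Procurement Manager", "Head of Finance"]),
    (float("inf"), ["Procurement Manager", "Head of Finance", "CIO"]),
]


def approval_chain(amount, high_risk=False):
    # Pick the first band whose upper limit covers the award value.
    chain = next(list(roles) for limit, roles in THRESHOLDS if amount <= limit)
    if high_risk:
        chain.append("Risk Committee")  # hypothetical extra sign-off for high-risk awards
    return chain


class AwardApproval:
    def __init__(self, amount, high_risk=False):
        self.chain = approval_chain(amount, high_risk)
        self.log = []  # every decision, with comment and UTC timestamp

    @property
    def rejected(self):
        return any(not entry["approved"] for entry in self.log)

    @property
    def approved(self):
        return len(self.log) == len(self.chain) and not self.rejected

    def next_approver(self):
        if self.rejected or len(self.log) == len(self.chain):
            return None
        return self.chain[len(self.log)]

    def decide(self, approver, approve, comment=""):
        # Approvals must follow the configured sequence; nobody can skip ahead.
        expected = self.next_approver()
        if approver != expected:
            raise PermissionError(f"next approver is {expected}, not {approver}")
        self.log.append({
            "approver": approver,
            "approved": approve,
            "comment": comment,
            "at": datetime.now(timezone.utc),
        })


award = AwardApproval(amount=200_000)
print(award.chain)  # ['Procurement Manager', 'Head of Finance']
award.decide("Procurement Manager", True, "Scores support Supplier X")
award.decide("Head of Finance", True)
print(award.approved)  # True
```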

No single audit trail, and the forensic headache

All of these individual weaknesses boil down to one overarching problem: in an email-first RFx, there is no single place to see the whole story.

If an internal audit, regulator, or senior executive asks, “How did we end up choosing this supplier?”, someone will inevitably spend days digging through inboxes, shared folders and personal notes. Even then, parts of the story will be missing.

In a system like ADAM, each RFx is an object with a life history:

- When it was created, and by whom.

- Which vendors were invited, and when they responded.

- What questions were asked, and how they were answered.

- Which criteria and weightings were applied.

- How each evaluator scored each response.

- What approvals were obtained, and in which sequence.

- When and how the award decision was finalised.

Instead of a forensic reconstruction, audit becomes the simple act of opening that record and reading it end-to-end.
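Under the hood, “an object with a life history” can be modelled as an append-only event log. The Python sketch below is hypothetical: `RfxAuditLog` and the event names are illustrative. The hash-chaining is one common tamper-evidence technique chosen for the example, in which each entry stores a SHA-256 hash of the previous one so any retroactive edit is detectable. It is not a description of how ADAM stores its records.

```python
import hashlib
import json
from datetime import datetime, timezone


class RfxAuditLog:
    """Hypothetical append-only event log for one RFx (illustrative, not ADAM's design).

    Each entry stores the hash of the previous entry, so editing any past
    event breaks the chain and verify() returns False.
    """

    def __init__(self, rfx_id: str):
        self.rfx_id = rfx_id
        self._entries = []

    def record(self, actor: str, action: str, **details) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else "0" * 64
        entry = {
            "rfx_id": self.rfx_id,
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "details": details,  # must be JSON-serialisable
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        prev = "0" * 64
        for e in self._entries:
            if e["prev_hash"] != prev or e["hash"] != self._digest(e):
                return False
            prev = e["hash"]
        return True

    def history(self):
        # Read the whole story end-to-end, in order.
        return [(e["at"], e["actor"], e["action"], e["details"]) for e in self._entries]


log = RfxAuditLog("RFX-001")
log.record("j.tan", "rfx_created", title="IT outsourcing")
log.record("j.tan", "vendors_invited", vendors=["Vendor A", "Vendor B"])
print(log.verify())  # True
```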

Before and after: one sourcing event, two very different stories

To make this tangible, imagine the same high-value IT outsourcing RFx run two ways.

In the email-based version, the CIO and procurement team send out a PDF brief to five vendors they know well. Over three weeks, the scope changes twice via email. Some vendors pick up the changes; others don’t. Proposals come back in a mix of formats. One key vendor asks for an extension and is quietly allowed to submit a day late. Evaluation happens in a spreadsheet built from the last big RFx; someone tweaks a formula without realising the impact. The final decision is recorded as a short email from the CIO: “Let’s go with Vendor B; they’ve done work for us before.” Six months later, a board member asks, “Why not Vendor C? They seem stronger.” There is no clean story to tell.

In the system-based version, the same event is created in ADAM. Vendors are selected from a defined pool. The scope lives in a single RFx record; changes are logged and reflected to all participants. Deadlines are configured and enforced. Vendors submit responses through the portal. Q&A is handled centrally, with answers shared consistently. Evaluators score masked proposals against locked criteria and weightings. The recommendation is generated from the results and routed through a configured approval chain. The board member asks the same question six months later, and you open the RFx record: “Here are the criteria we agreed, here is how each bidder scored, here is where the CIO and Finance approved the award.” The conversation moves on.

The commercial outcome might be identical; Vendor B may still be the best choice. But the governance story is radically different.

Turning this into a practical plan

Closing these gaps doesn’t mean switching everything on at once. Most organisations start by fixing two or three of the most painful failure modes: perhaps Q&A, deadlines, and scoring. Then they add masking and structured approvals. Over time, email becomes the exception rather than the backbone.

To support that journey, there is a detailed RFx controls matrix (CSV) that maps:

- Each failure mode to the associated risk category (fairness, fraud, reputational, regulatory).

- The specific ADAM controls that address it (masking, timestamps, Q&A workflows, weighted scoring, approval chains).

- Columns for your current state, target state, owner, and planned timeline.

It’s designed to be a working document for procurement, risk and IT to align on what “good” looks like.

👉 Request our RFx controls matrix (CSV) and use it as a blueprint to move your sourcing from fragile email threads to robust, auditable digital controls, without losing the speed and flexibility your stakeholders need.

👉 If you’d like to schedule a session, get in touch and we’ll find a time that works.

https://adam-procure.com/contact-us/

The shift is already underway. Malaysian CPOs are stepping into a bigger mandate, one built on visibility, accountability, and value creation. With the right foundations in place, procurement doesn’t just protect the bottom line; it helps grow the business.
